Journaling with AI: What Changes When the Page Talks Back

How talking to LLMs helps me hear myself think

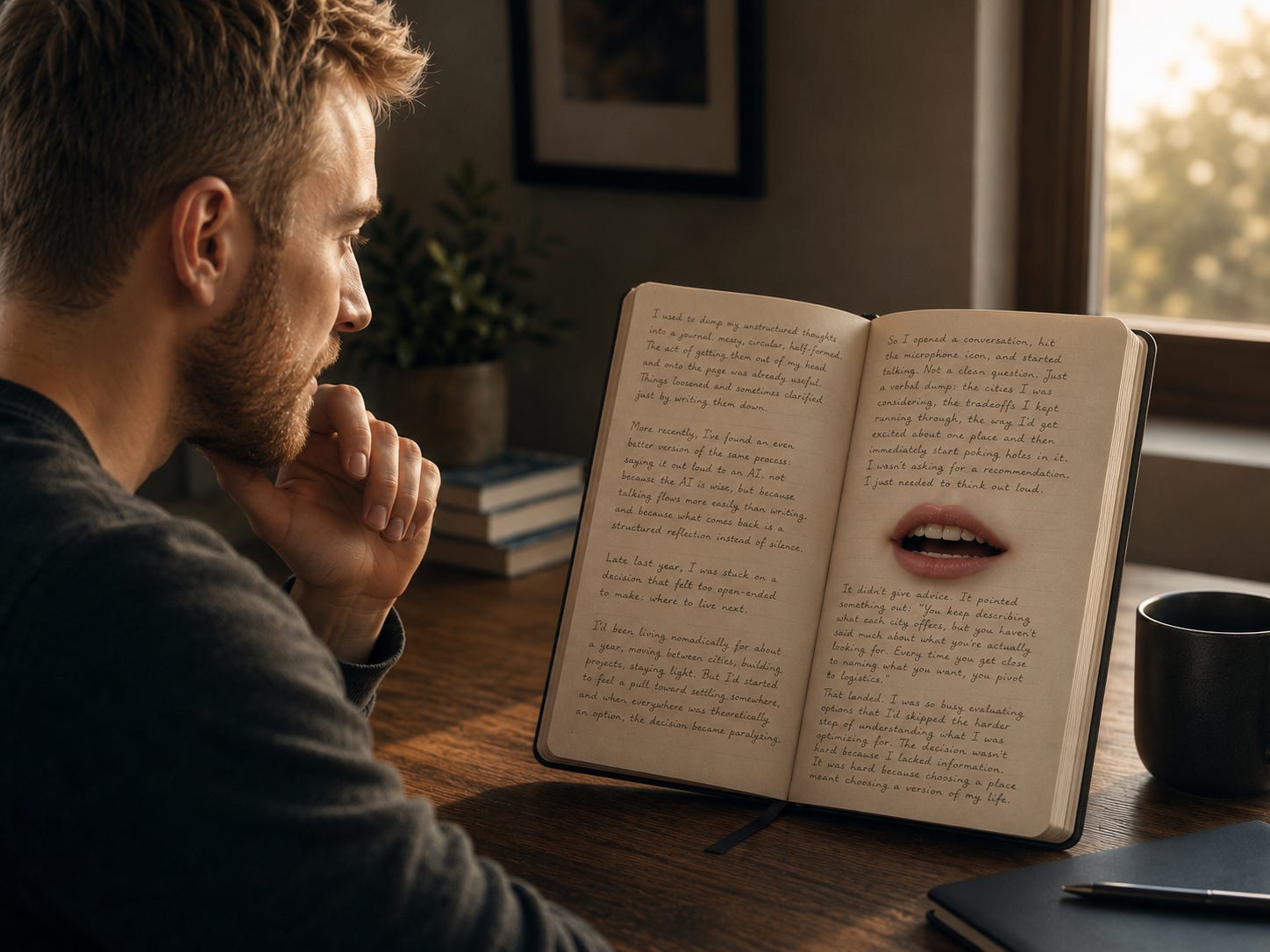

I used to dump my unstructured thoughts into a journal. Messy, circular, half-formed. The act of getting them out of my head and onto the page was already useful. Things loosened and sometimes clarified just by writing them down.

More recently, I’ve found an even better version of the same process: saying it out loud to an AI. Not because the AI is wise, but because talking flows more easily than writing, and because what comes back is a structured reflection instead of silence.

Late last year, I was stuck on a decision that felt too open-ended to make: where to live next.

I’d been living nomadically for about a year, moving between cities, building projects, staying light. But I’d started to feel a pull toward settling somewhere, and when everywhere was theoretically an option, the decision became paralyzing.

So I opened a conversation, hit the microphone icon, and started talking. Not a clean question. Just a verbal dump: the cities I was considering, the tradeoffs I kept running through, the way I’d get excited about one place and then immediately start poking holes in it. I wasn’t asking for a recommendation. I just needed to think out loud.

It didn’t give advice. It pointed something out: “You keep describing what each city offers, but you haven’t said much about what you’re actually looking for. Every time you get close to naming what you want, you pivot to logistics.”

That landed. I was so busy evaluating options that I’d skipped the harder step of understanding what I was optimizing for. The decision wasn’t hard because I lacked information. It was hard because choosing a place meant choosing a version of my life.

That, to me, is the real value of this kind of AI use. Not answers. Reflection.

There’s a concept in coding called rubber duck debugging. When you’re stuck on a bug, you explain the problem out loud to a rubber duck on your desk. No feedback required. The act of articulation often gets you unstuck.

The same principle applies here, but with an upgrade. AI makes the duck better.

It doesn’t just sit there while you externalize the problem. It reflects back patterns, contradictions, and implicit criteria you may not have named. In my case, it noticed that I kept talking about cities as bundles of features while avoiding the more revealing question of what kind of life I actually wanted. That is more than journaling. That is a reflection loop.

Most of the time, I’m not even typing. I’m talking out loud, stream of consciousness, half-formed sentences and all. That’s hard to do with another person. Even with close friends, there’s a social contract: be somewhat coherent, don’t ramble too long, don’t repeat yourself too much. With AI, you can be as messy and circular as you need to be, and the clarity is often somewhere in that messiness.

The trick is not to ask for advice too quickly. Start with reflection.

“Here’s what I’m thinking. What do you hear?”

“What feels inconsistent in this?”

“What am I optimizing for without quite saying it?”

Those kinds of questions keep the center of gravity inside your own thinking. The AI is not deciding for you. It is helping you hear the decision already forming underneath the noise.

That distinction matters, because there’s an obvious counterpoint here: doesn’t this risk outsourcing thinking?

It can, if you use it lazily. If you ask the AI what to do before you’ve really said what’s going on, you can end up borrowing a conclusion instead of discovering one. But that’s not the use I’m describing. The value here is not in handing over agency. It’s in creating enough structure that you can see your own thoughts more clearly. Used well, it feels less like outsourcing cognition and more like building scaffolding for self-honesty.

That is also why the limitations of AI matter less here than people sometimes assume.

The AI doesn’t actually understand you. It’s pattern-matching on language. It has no inner experience of your dilemma, no felt sense of what you’re going through.

And yet the reflection can still be useful.

I think this points to something slightly uncomfortable: what we get from being understood may have less to do with the other party’s inner comprehension than we’d like to believe. Sometimes what helps is not empathy but structure. Empathy is valuable when you need comfort, witness, or emotional safety. Structured reflection is valuable when you need to expose contradictions, name implicit assumptions, or move a thought forward.

Those are not the same thing.

A good friend may be better at the first. An AI can be good at the second.

I noticed a related contrast during a Vipassana meditation course I took last year.

Vipassana asks you to observe experience without narrating it. You sit, notice sensation arise and pass, and resist the urge to interpret. The point is to encounter reality before concept, before story.

AI reflection does nearly the opposite. It takes raw experience and wraps it in language.

For example, in meditation I might notice a tightness in my chest and simply observe it: its texture, its temperature, the way it shifts. No story. In an AI conversation about that same feeling, I might say: “I think I’m anxious about committing to a place because stability has always felt like a trap to me.” One approach dissolves the narrative. The other examines it.

I’ve come to think they’re complementary. Vipassana helps you see what is happening in your body beneath your stories. AI reflection helps you examine the stories themselves.

The meditation practice also gives me a kind of quality control. When an AI reflection lands, I can feel it in my body: a settling, a recognition. When it is clever but wrong, I can feel that too. The point is not to trust the machine. The point is to get better at recognizing what rings true.

What this becomes, over time, is a practice of self-excavation. Instead of looking outward for answers, you use conversation to surface what you already believe, clarify your priorities, and notice the patterns in your own thinking.

The value isn’t that the AI knows you. It’s that it helps you hear yourself better.